How to analyse a usability test

Laborious as it may be, proper analysis is a worthwhile step that can maximise the value of usability tests.

Reasons for user testing

There are many different reasons for testing an experience, such as:

- Discover use cases in order to understand underlying user needs and how best to address them

- Learn what users like and don't like about a product or service

- Learn how your interface is being used to better understand your user flows

- Facilitate a broader conversation about your product/service (perhaps to do a task analysis or journey mapping exercise)

- Get feedback on a concept or idea

- Explore the impact of one or more design changes on the overall user experience

- Find out whether any usability issues exist

Your reason for testing will shape your research objectives. Your research objectives will, in turn, determine how you analyse your user test. I discuss this further in my article on setting research objectives and mapping assumptions for user research.

Because the reason for the user test ultimately influences your analysis, covering every type of user test would require a deep dive into each one. For this article I am going to focus exclusively on testing for usability issues.

Strong planning makes for better analysis

You may have a specific reason to suspect usability problems (e.g. based on analytics, customer feedback, or a recent redesign). Or you may simply want to conduct an open-ended exploration of the user experience. Whatever your motivation for testing, your time will be limited, so you won't be able to test everything.

In preparing for your user test, you should already have a general sense of the tasks that your users typically complete. You can learn what these tasks are through other research (e.g. observations, diary studies, user interviews, user tests). For this article I will assume that you know the tasks in advance.

Once we know what tasks we want to test we reach a fork in the road:

- You can recruit users who currently need to complete the task(s) you are testing

- You can recruit any suitable users and give them scenarios to test

Either way, you will want to list the possible tasks in your test plan. That way, if you go with the first option, you can ask participants to circle back to a task even if it's not part of their process. Even if you take the second option, you might sometimes need to keep the scenarios fairly generic (for example: "Imagine you want to buy a new phone on a monthly plan" without specifying which phone or which plan). In either case, having a list of tasks means that even if your participants won't naturally complete every task, you can ask them to do so for the sake of the test.

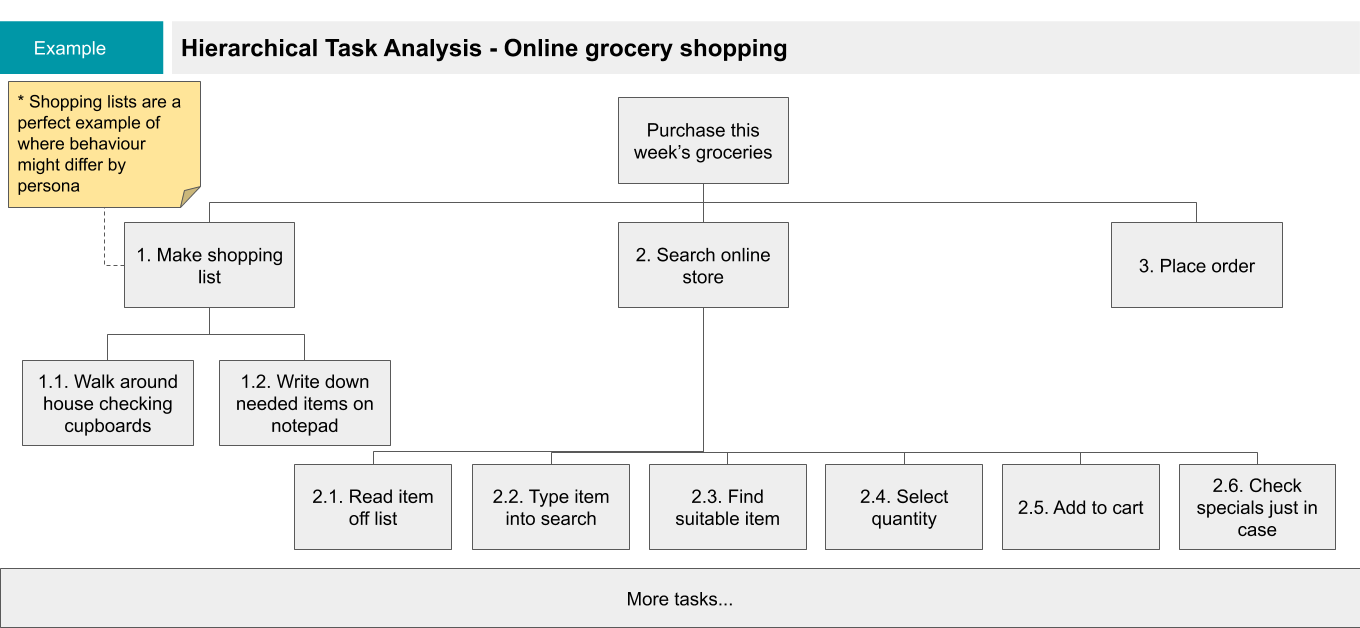

A Hierarchical Task Analysis can be useful here. You want to separate out tasks related to the experience (e.g. searching for a product on a store) from the actual tasks your users need (e.g. purchase a product that they need). It's important that you understand the coverage of your user test to know what parts of the experience have not been tested.
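If you keep a record of which tasks each participant attempted, checking coverage can be as simple as the sketch below. This is only an illustration: the task names, participant labels, and the `task_coverage` helper are all made up for the example, and a spreadsheet would do the same job.

```python
# Hypothetical task list from a test plan (names are illustrative only).
planned_tasks = [
    "search for a car",
    "compare results",
    "book a car",
    "amend a booking",
]

# Hypothetical record of which tasks each participant actually attempted.
attempted = {
    "P1": ["search for a car", "book a car"],
    "P2": ["search for a car", "compare results"],
    "P3": ["search for a car", "compare results", "book a car"],
}


def task_coverage(planned, attempted_by_participant):
    """Map each planned task to the participants who attempted it."""
    coverage = {task: [] for task in planned}
    for participant, tasks in attempted_by_participant.items():
        for task in tasks:
            if task in coverage:
                coverage[task].append(participant)
    return coverage


coverage = task_coverage(planned_tasks, attempted)

# Tasks that nobody attempted are the gaps in the test's coverage.
untested = [task for task, people in coverage.items() if not people]
print(untested)
```

Any task that ends up in `untested` is a part of the experience that this round of testing says nothing about.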

An open-ended user test has one key advantage: your research participants are more likely to behave “naturally”, using the interface as they normally would. But the more open-ended your test plan, the higher your chance of running into these issues:

- Your small sample size means that you miss out on testing certain parts of the experience

- Note taking (whether by annotating the transcript or by taking notes against the relevant section) is more difficult as users may jump around in unexpected ways

- You are more likely to take lighter notes (with fewer observations) on parts of the experience that are brief

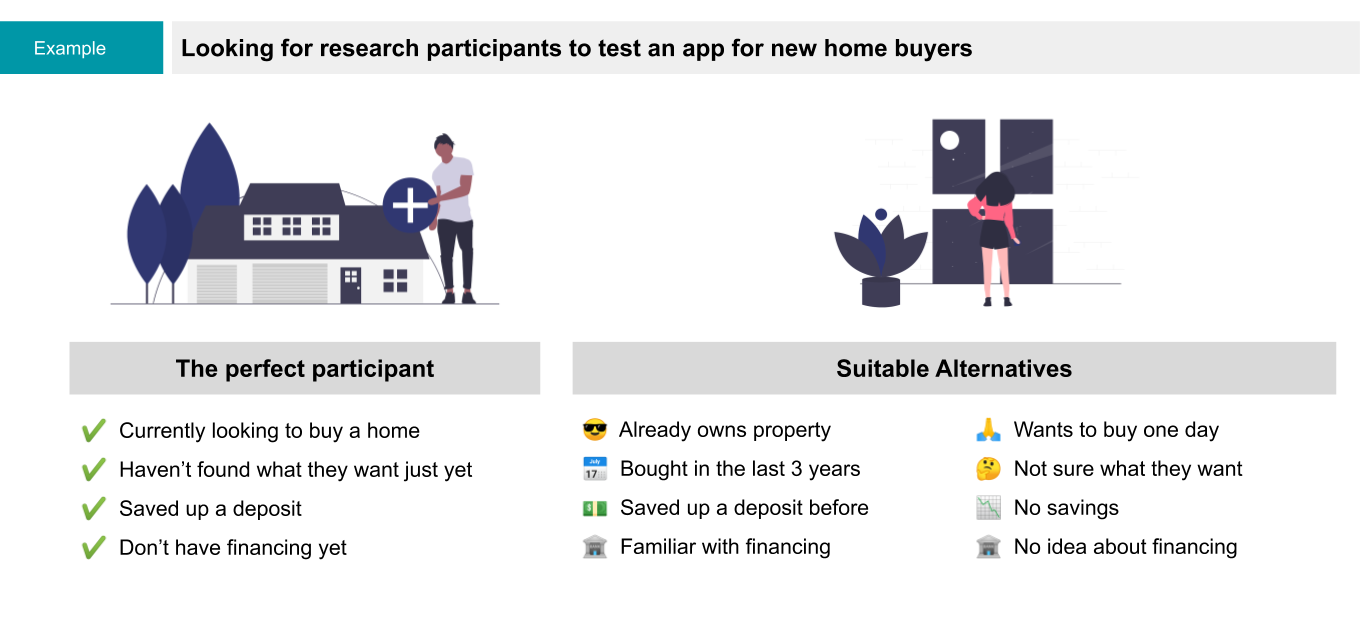

- It's (usually) harder to recruit participants at the exact time that they need to complete the desired task(s) (e.g. consider trying to find a participant who is looking to buy a house right now in order to test an app for home buyers)

On the other hand, a scenario-based user test can lead to "unnatural" behaviour, which can mean:

- You miss out on seeing behaviours that would emerge if the participants received no instructions

- Your instructions provide hints (e.g. through the words you choose) that could hide a usability issue

- Participants might take a task less seriously and make choices they would otherwise avoid

It's important to keep these considerations in mind when writing your test plan. The tasks that you cover (and the way that you cover them) will affect the research notes that you capture and ultimately your analysis.

Capturing notes during a user test

User testing is one of those times where a transcript is not as useful as well-structured notes. That said, if you are taking notes you should still try to capture what participants say verbatim.

If you use a transcript you may be tempted to use a third-party transcription service. For a user test this can be problematic because the scribes aren't likely to include observations. These might be simple observations, like what page/section/screen a user is on, or more interaction-related ones, such as what users click or tap on (or fail to click or tap on).

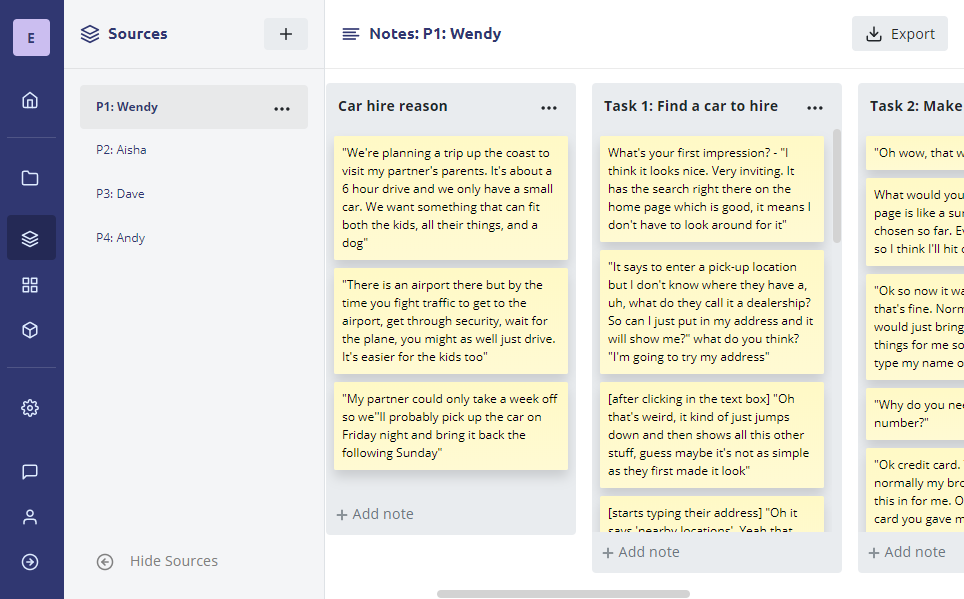

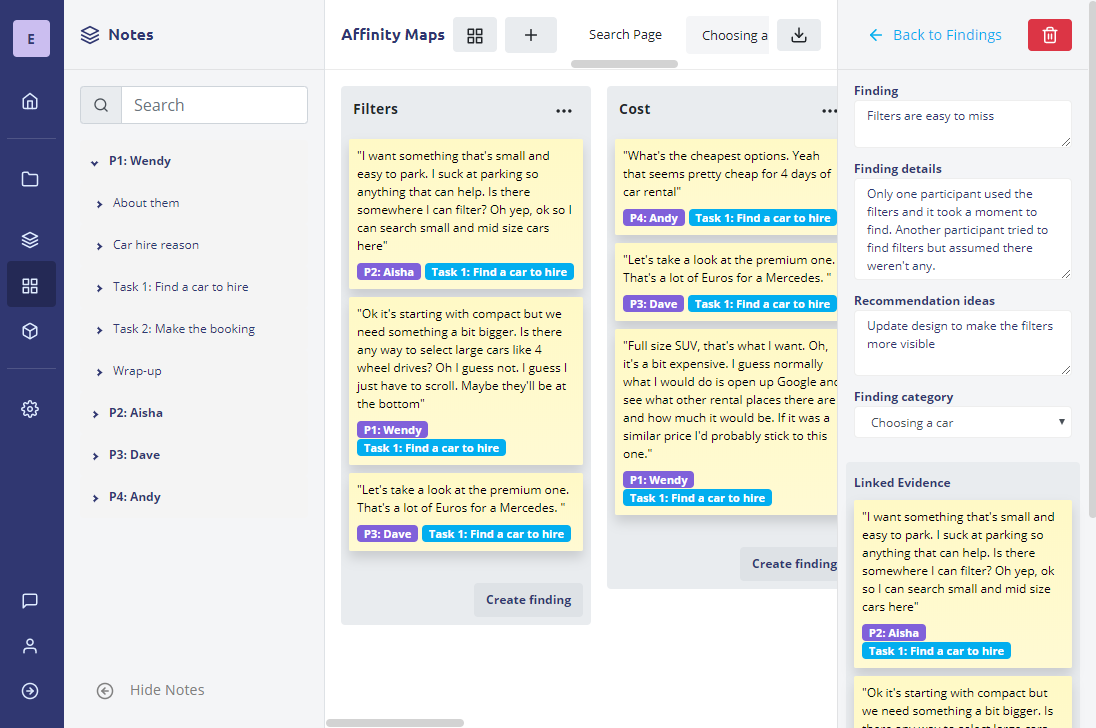

In this screenshot, we see an example of usability testing notes captured using our Evolve Research App. Rather than a transcript, you take notes against the relevant task in the mod-guide. In this example the notes are for usability testing a car hire website. This is effective because hiring a car follows a short and consistent process.

Another approach would be to take notes based on which screen the user is on. This could be useful for an app or website where a particular task may span multiple screens, especially if multiple tasks can reference the same screen.

Either way the advantage of taking structured notes is that it greatly simplifies your analysis.

Affinity Mapping for usability issues

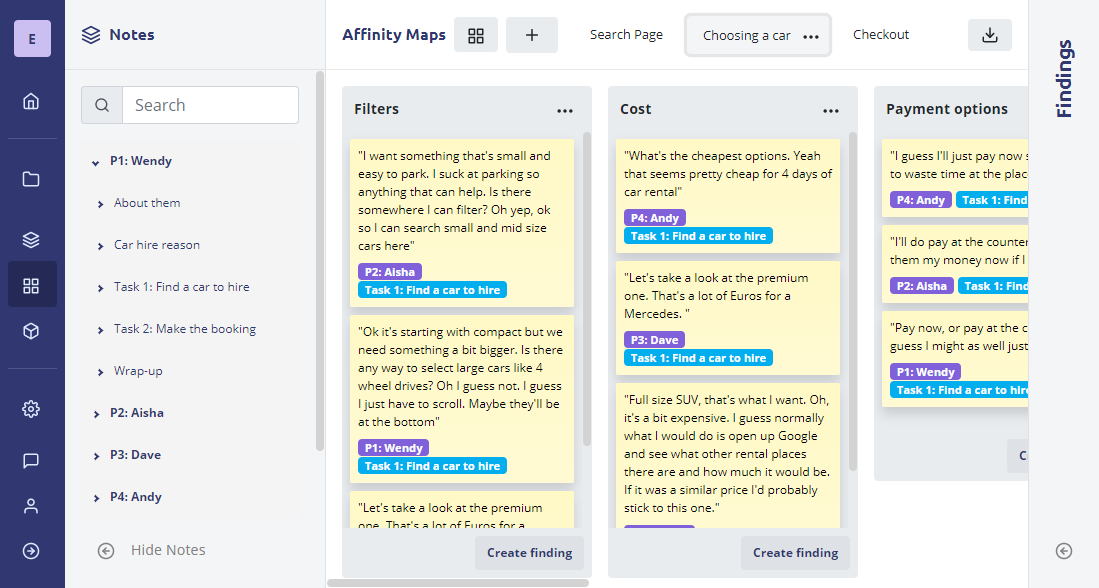

The fastest (and most effective) approach is usually to structure your analysis the same way that you structured the notes. So if the notes are structured around tasks you should create an affinity map for each task. If the notes are per screen (or page) you should have an affinity map for each screen.

Note: It's important to be flexible. You might have structured your notes around tasks but find that participants jump back and forth between screens. This would make it hard to give recommendations per-screen. On the other hand you may structure your notes per screen but find that usability issues are related to the task rather than the capabilities of the screen.

In this screenshot we can see the need to be flexible with how we approach our analysis. Each participant has notes against "Task 1: Find a car to hire" but that task was split over 2 pages: search and search results. In this case it was more effective to split the analysis to each screen to make it easier to spot trends between participants. This is also useful because we likely want to report on the usability issues on a per-screen basis.

The most important thing to keep in mind is this: don't do your affinity mapping on a per-participant basis. Instead you should review each task/screen one by one across participants. If you go through every participant one by one, then the notes from one participant will no longer be salient when you look at the same section for the next participant.
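If your notes live in a spreadsheet or an export rather than a dedicated tool, regrouping them by section before you start is straightforward. Here is a minimal sketch, assuming each note is stored as a (participant, section, text) tuple. The notes themselves are invented for illustration, and `group_by_section` is a hypothetical helper rather than a feature of any particular tool.

```python
from collections import defaultdict

# Invented example notes: (participant, section, note text).
notes = [
    ("P1", "Search", "Typed the city name into the pick-up field"),
    ("P1", "Search results", "Did not notice the filters"),
    ("P2", "Search", "Hesitated over the date picker"),
    ("P2", "Search results", "Scrolled past the filters"),
]


def group_by_section(all_notes):
    """Collect notes per section so each section is reviewed across participants."""
    grouped = defaultdict(list)
    for participant, section, text in all_notes:
        grouped[section].append((participant, text))
    return dict(grouped)


grouped = group_by_section(notes)

# Review one section at a time, with every participant's notes side by side.
for section, section_notes in grouped.items():
    print(section)
    for participant, text in section_notes:
        print(f"  {participant}: {text}")
```

Laid out this way, the notes from different participants about the same screen sit next to each other, which is what makes the patterns easier to spot.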

Finding patterns and themes

A first step for finding usability issues is to group notes very broadly. In the screenshot above there are comments from multiple participants about filters. If you find that there are too many notes in one theme then you can consider splitting them further.

Your first pass of the analysis should simply involve putting all of the related notes together. So if there are comments or observations about filters they all go into one theme.
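Grouping the notes is a manual judgement call, but once each note has a theme you can use a quick tally to see which themes have grown too large. The sketch below assumes you have already tagged each note by hand. The data is invented, and the threshold of three notes is an arbitrary assumption for the example rather than a recommended limit.

```python
from collections import defaultdict

# Invented example: each entry is (theme, participant) for one hand-tagged note.
tagged_notes = [
    ("filters", "P1"),
    ("filters", "P2"),
    ("filters", "P2"),
    ("filters", "P3"),
    ("pricing", "P1"),
]


def summarise_themes(tagged):
    """Return {theme: (note count, number of distinct participants)}."""
    note_counts = defaultdict(int)
    participants = defaultdict(set)
    for theme, participant in tagged:
        note_counts[theme] += 1
        participants[theme].add(participant)
    return {
        theme: (note_counts[theme], len(participants[theme]))
        for theme in note_counts
    }


def themes_to_split(summary, max_notes=3):
    """List themes with more notes than the (assumed) threshold."""
    return [
        theme
        for theme, (note_count, _) in summary.items()
        if note_count > max_notes
    ]


summary = summarise_themes(tagged_notes)
print(summary)
print(themes_to_split(summary))
```

The tally only tells you where to look. Whether a large theme should actually be split is still something you decide by reading the notes.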

Complete every task, screen, or section for every participant before moving on to the next part of the analysis. In fact it can help to leave some time between doing your first pass of the analysis and looking for findings. Personally I've found that a good night's sleep helps me approach the analysis with the best headspace. But don't wait too long between grouping notes into themes and identifying research findings! Human memory is fragile. You may find that you need to spend more time reviewing your themes if you wait too long to finish your analysis.

Turning patterns into findings

This is where the value of a deep analysis starts to come in. You may be tempted to simply take notes about usability issues as you watch the test (or the recording). And that fast analysis approach is great if you are testing a small number of tasks with very few participants. But even then you might miss the bigger picture.

Note: It's alright for some issues or observations to apply to only one participant. We're dealing with small sample sizes. That one participant may represent a significant portion of your user base. You need to use your judgement about the significance of the issue based on HOW it affects the participant's experience.

Once you have an overview of the experience across each task it's time to start drawing conclusions. In my article on analysis methods for user interviews I mentioned that we need to find a balance between rigour and speed. We deal with sample sizes that are too small to make confident predictions based on the number of participants that experience usability issues. Instead we need to combine evidence from multiple pieces of research together with our judgement to reach suitable conclusions.

For example: if filters on a page are easy to miss we may recommend that we change the design so that users are more likely to find them. But suppose most users are easily able to find what they are looking for even though they can't find the filters. In that case we have to think about the potential consequences of making one part of the design more visually prominent (e.g. it may break the visual hierarchy of the page and actually make it harder to use).

When working with small sample sizes most of our insights (and recommendations) will be based on assumptions. Rather than conducting randomised controlled trials for every design change we can just iterate quickly. With quick iteration, future usability tests can reveal whether a previous design change had an undesirable outcome.

Obviously the approach of fast analysis based on assumptions won't work in every scenario. You need to be a lot more rigorous if you are working with hazardous materials, dangerous machinery, healthcare, or any other area that has a direct impact on the wellbeing of others.

Making Recommendations

For some research findings you may be able to come up with a recommendation on the spot. For others you may need to spend some time thinking about it before you can work out what to do. However at this stage of the analysis you should not be making detailed design recommendations unless they are incredibly obvious. It's better to keep your recommendations at a high level - things to consider evaluating rather than designs that definitively need to change.

It's worth doing a pass over each of the findings and writing recommendations or reviewing your on-the-spot recommendations from before. It may also be worth noting the severity of the finding and the scale of the recommendation. Especially if you're in an agile environment, it might be worth coming up with smaller-scale fixes for particularly significant issues.
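One way to make severity and scale usable is to record both against each finding and sort on them. The sketch below is only an illustration: the 1 to 3 scales, the `Finding` structure, and the example findings are all assumptions made for the example, and you should use whatever rating scheme your team already works with.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    summary: str
    severity: int  # assumed scale: 1 = minor, 3 = severe
    scale: int     # assumed scale: 1 = small change, 3 = large change


def prioritise(findings):
    """Most severe first; among equally severe findings, smallest change first."""
    return sorted(findings, key=lambda f: (-f.severity, f.scale))


def quick_fixes(findings):
    """Severe findings that only need a small change."""
    return [f for f in findings if f.severity == 3 and f.scale == 1]


# Invented findings for illustration.
findings = [
    Finding("Checkout flow needs restructuring", severity=3, scale=3),
    Finding("Filters are easy to miss", severity=3, scale=1),
    Finding("Footer link label is unclear", severity=1, scale=1),
]

for finding in prioritise(findings):
    print(finding.severity, finding.scale, finding.summary)

print([f.summary for f in quick_fixes(findings)])
```

The ratings are still judgement calls, so treat the sorted list as a starting point for discussion rather than a final priority order.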

The benefits of a deeper analysis

In the UX world we constantly have to strike a balance between research rigour and product development speed. While you spend time deciding whether or not to fix a usability issue, you have users out there experiencing that very issue. On the other hand, you don't want to miss an issue because you failed to do a proper analysis or, worse, do a shallow analysis and make a bad recommendation that causes more problems down the line.

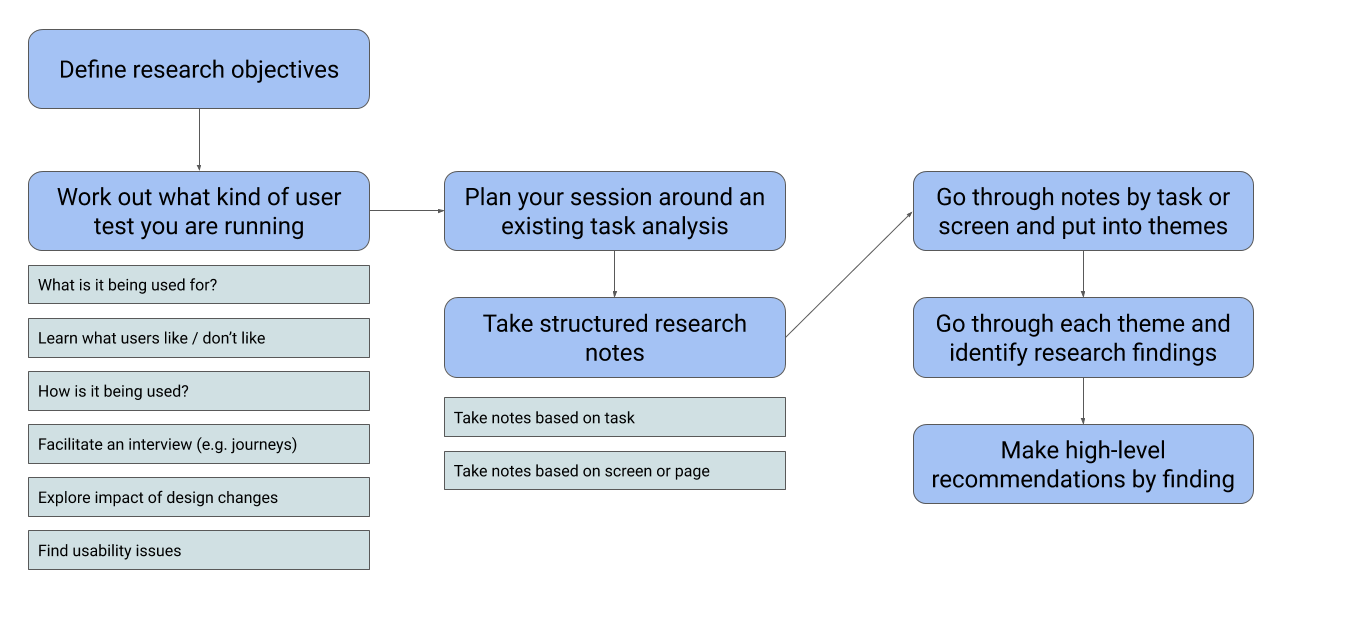

The method we propose tries to strike that balance. Here is a summary:

- Work out what kind of user test you need to do (based on your research objectives)

- Plan the analysis based around tasks (and consider whether or not your test will be open ended or follow specific scenarios)

- Take your research notes either against a task or against screens/pages

- Do a first-pass analysis by putting relevant notes into themes (with a separate affinity map for each task or screen)

- Look through each of the themes and identify research findings based on patterns you see across participants (it's also OK if some findings correspond to only one participant)

- Go through the findings and make recommendations on what (if anything) to do about them

With this approach you can add some rigour to your usability analysis while still moving at a fast pace. It won't be as quick as making snap judgments while you take your notes but it will be more robust.

Want to use Evolve to analyse your usability tests? We recently added a how-to guide on how to use Evolve for usability tests.
